Node-Based Interactive Music #music #audiovisual #web #javascript #p5js #tonejs #itp #the-code-of-music

While we were exchanging ideas about what we could do with Tone.js and p5.js, Henry Chen came up with the idea of a node-based system for interacting with music in a virtual 3D environment, in which moving one node could simultaneously affect multiple other nodes that in turn affect the music. We agreed that building a 2D version first would be a good starting point.

We discussed how to split the work and ultimately decided that Henry would focus on the sound (Tone.js) while I focused on the visuals (p5.js).

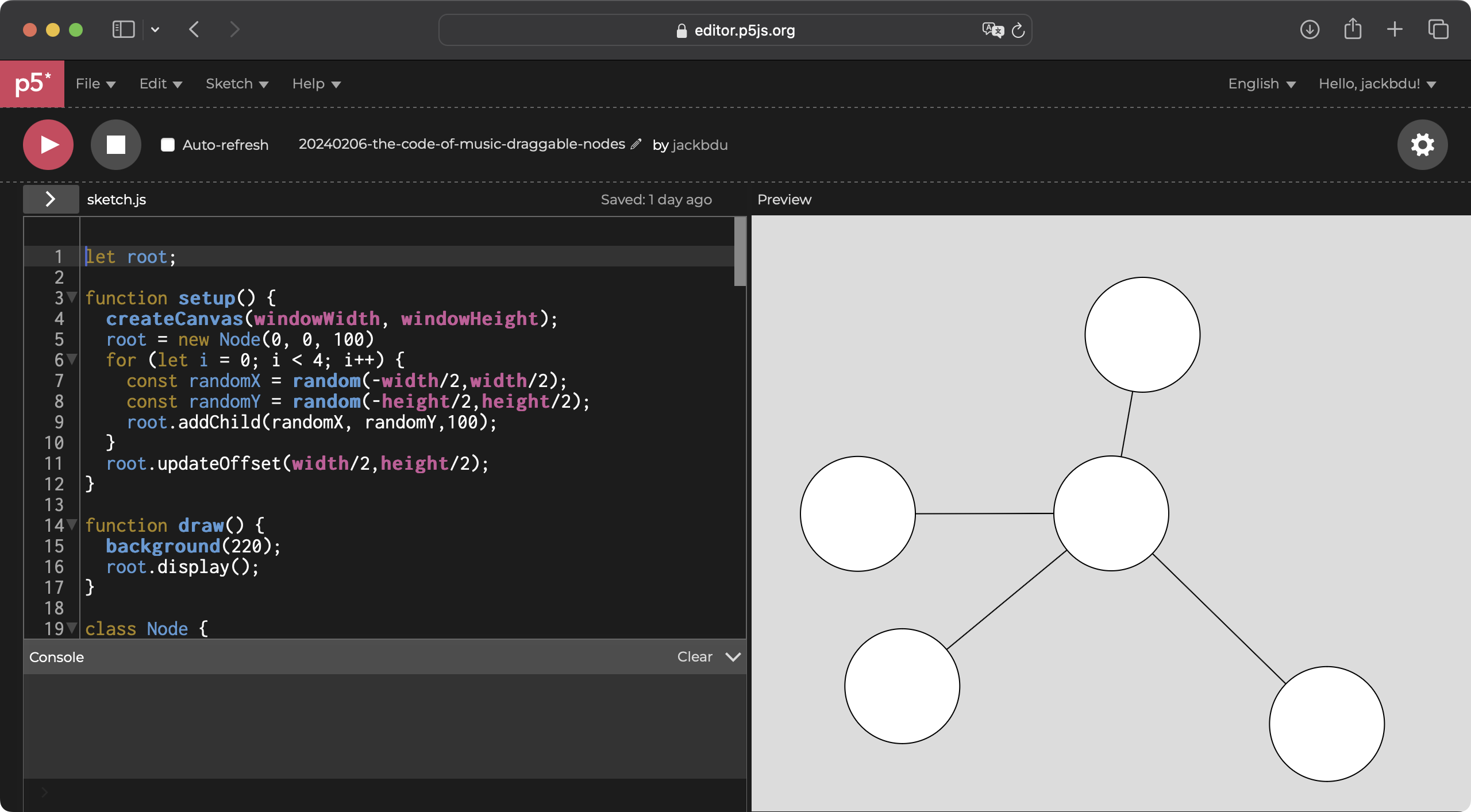

I started by implementing basic draggable nodes in p5.js. Early on, I debated whether to use HTML elements or even SVG graphics as nodes, since attaching event listeners to them would make mouse interaction much easier to handle than implementing it on a p5.js canvas. I still decided to go with the p5.js canvas, because it would let me add more interesting visuals later on, and I thought it would be a fun challenge as well.

It did end up taking me quite some time to account for all kinds of scenarios and avoid unexpected behavior, but the satisfaction of seeing it work perfectly was worth all the effort.
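To give a sense of what handling dragging on a canvas involves, here is a minimal sketch, not the project's actual code. It assumes each node is a plain object with a hypothetical `{ x, y, r }` shape, and the helper names (`nodeAt`, `startDrag`, `moveDrag`, `endDrag`) are made up for illustration. Only `mousePressed`, `mouseDragged`, `mouseReleased`, `mouseX`, `mouseY`, `width`, and `height` come from p5.js.

```javascript
// Hypothetical node shape: { x, y, r } in canvas pixels.
const nodes = [
  { x: 100, y: 100, r: 20 },
  { x: 200, y: 150, r: 20 },
];

let dragged = null;              // node currently being dragged, if any
let grabOffset = { x: 0, y: 0 }; // pointer offset from the node center at grab time

// Return the topmost node under the point (px, py), or null.
// Iterating backwards means nodes drawn later (on top) are picked first.
function nodeAt(list, px, py) {
  for (let i = list.length - 1; i >= 0; i--) {
    const n = list[i];
    if (Math.hypot(px - n.x, py - n.y) <= n.r) return n;
  }
  return null;
}

// Remember which node was grabbed and where, so it does not jump to the cursor.
function startDrag(px, py) {
  dragged = nodeAt(nodes, px, py);
  if (dragged) grabOffset = { x: px - dragged.x, y: py - dragged.y };
}

// Move the grabbed node, keeping it fully inside a w-by-h canvas.
function moveDrag(px, py, w, h) {
  if (!dragged) return;
  dragged.x = Math.min(Math.max(px - grabOffset.x, dragged.r), w - dragged.r);
  dragged.y = Math.min(Math.max(py - grabOffset.y, dragged.r), h - dragged.r);
}

function endDrag() {
  dragged = null;
}

// p5.js event hooks (global mode); these only run inside a p5.js sketch.
function mousePressed() { startDrag(mouseX, mouseY); }
function mouseDragged() { moveDrag(mouseX, mouseY, width, height); }
function mouseReleased() { endDrag(); }
```

Storing the grab offset and tracking a single `dragged` node are two of the small details that HTML or SVG elements would otherwise have handled for free. Without them, a node snaps to the cursor on click, or a fast drag passes over another node and picks it up.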

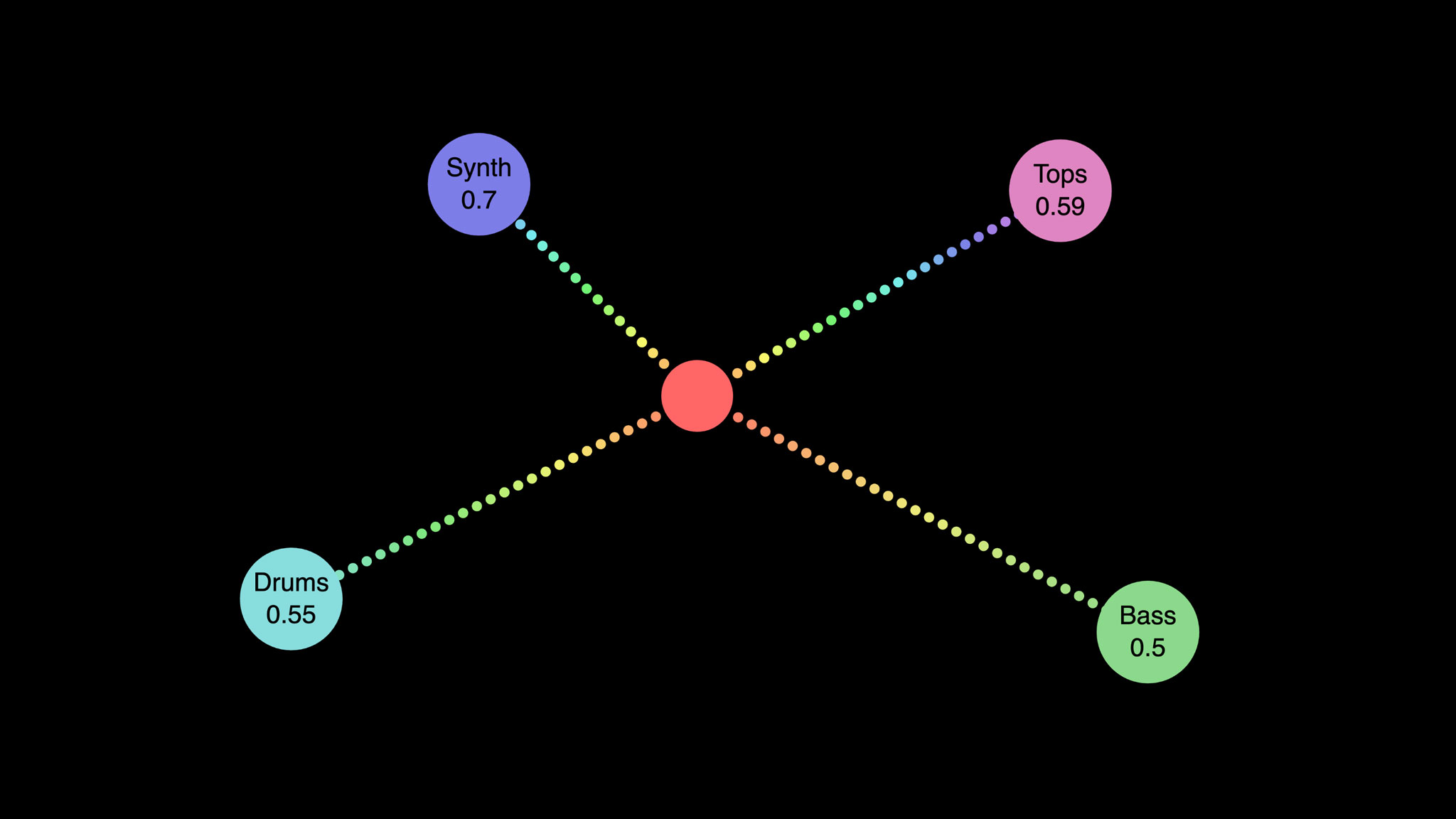

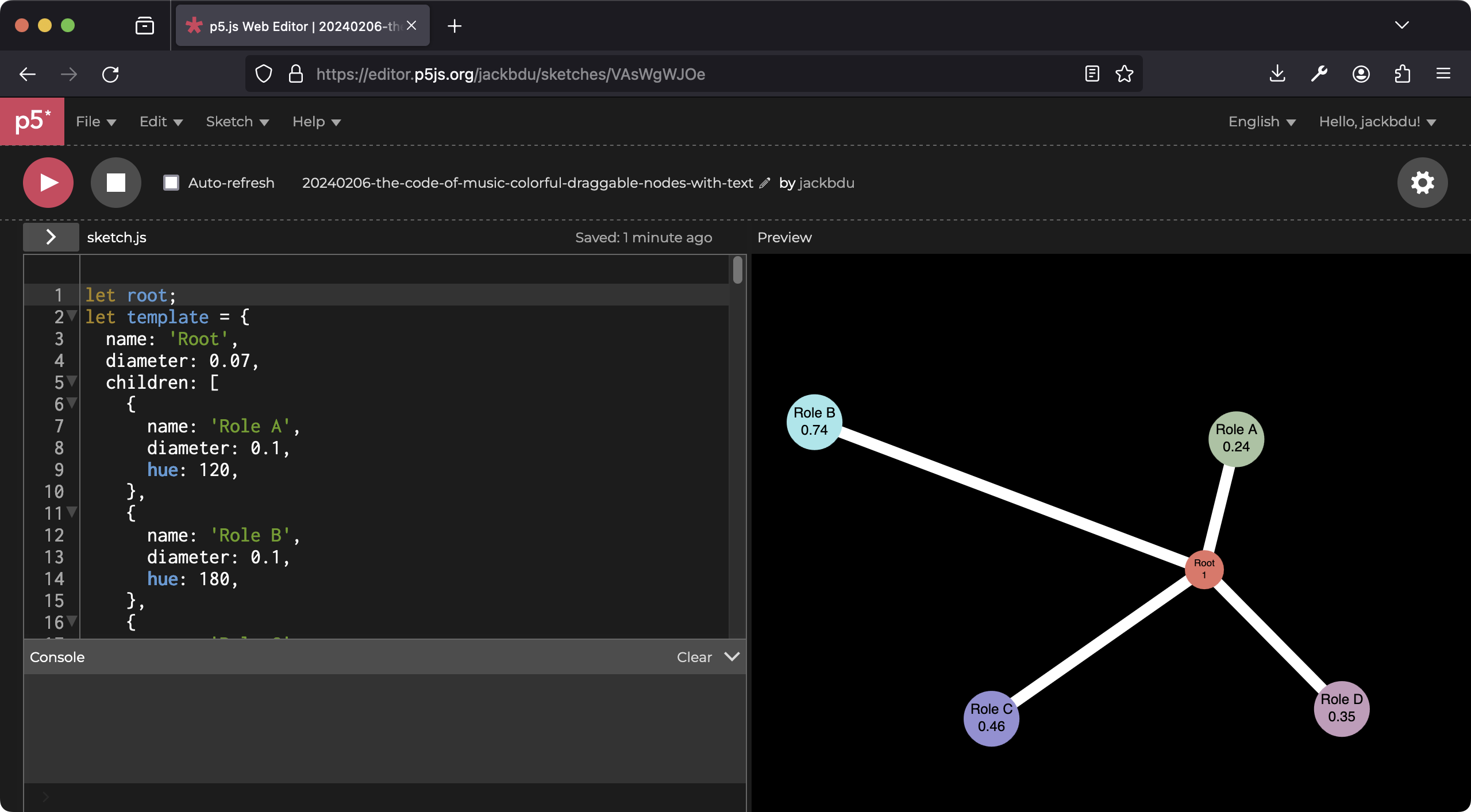

Next, I added text indicating each node’s role as well as its normalized value, which is based on its distance from the parent node. Each node’s color also changes based on its value.
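As an illustration of the idea, here is one way a normalized value and a value-driven color could be computed. This is a sketch under assumptions: `maxDist` (the distance that maps to 1) and the two endpoint colors are made-up parameters, and colors are plain `[r, g, b]` arrays so the snippet runs outside p5.js. Inside a sketch, p5's `dist()` and `lerpColor()` could do the same job.

```javascript
// Distance from the parent, scaled to [0, 1].
// maxDist is an assumed parameter: distances at or beyond it map to 1.
function normalizedValue(node, parent, maxDist) {
  const d = Math.hypot(node.x - parent.x, node.y - parent.y);
  return Math.min(d / maxDist, 1);
}

// Linear interpolation between two [r, g, b] colors, with t in [0, 1].
function mixColor(from, to, t) {
  return from.map((c, i) => Math.round(c + (to[i] - c) * t));
}

// Example: a node 50 px from its parent, with a 100 px range.
const value = normalizedValue({ x: 30, y: 40 }, { x: 0, y: 0 }, 100);
const nodeColor = mixColor([0, 100, 200], [100, 200, 0], value);
```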

The next day, I spent a lot of time cleaning up the code so it is more organized and readable. I also experimented with a new style for the links between nodes. The links now consist of small circles filled with colors interpolating between colors of different nodes.
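The dotted link style can be sketched roughly as follows. This is an assumption-laden illustration rather than the code from the project: `linkDots` and `spacing` are hypothetical names, and each node is assumed to carry an `[r, g, b]` `color` array. The function only computes positions and colors, and the drawing itself would happen with p5's `fill()` and `circle()`.

```javascript
// Compute evenly spaced dots between nodes a and b.
// Each dot gets a color interpolated from a.color to b.color.
// spacing is the approximate distance in pixels between neighboring dots.
function linkDots(a, b, spacing) {
  const length = Math.hypot(b.x - a.x, b.y - a.y);
  const count = Math.max(1, Math.floor(length / spacing)); // number of gaps
  const dots = [];
  for (let i = 0; i <= count; i++) {
    const t = i / count; // 0 at node a, 1 at node b
    dots.push({
      x: a.x + (b.x - a.x) * t,
      y: a.y + (b.y - a.y) * t,
      color: a.color.map((c, k) => Math.round(c + (b.color[k] - c) * t)),
    });
  }
  return dots;
}

// In a p5.js draw() loop, each dot could then be rendered with something like:
//   fill(dot.color[0], dot.color[1], dot.color[2]);
//   circle(dot.x, dot.y, 4);
```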

Live demo of interactive nodes (click and drag to interact).

View source code in the p5.js Web Editor.

Finally, Henry applied this node-based interactive system to control a loop, with the distances between nodes affecting the lowpass filter applied to each branching node. You can check out his experiment in the p5.js Web Editor.
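For readers curious how a distance could drive a lowpass filter, here is a rough sketch. It is not Henry's code: the function name, the 200 Hz to 8000 Hz range, and the choice that a larger value opens the filter are all assumptions. The mapping is exponential because pitch perception is roughly logarithmic, so equal movements of a node produce similar-sounding changes.

```javascript
// Map a normalized value in [0, 1] to a filter cutoff in Hz.
// minHz and maxHz are assumed defaults, not values from the project.
function valueToCutoff(value, minHz = 200, maxHz = 8000) {
  const t = Math.min(Math.max(value, 0), 1); // clamp to [0, 1]
  return minHz * Math.pow(maxHz / minHz, t); // exponential sweep
}

// With Tone.js loaded, the result could be applied along these lines
// (shown as comments so the snippet runs without the library):
//   const filter = new Tone.Filter(valueToCutoff(0), "lowpass").toDestination();
//   player.connect(filter);                                // player: any Tone.js source
//   filter.frequency.rampTo(valueToCutoff(value), 0.05);   // short ramp to avoid clicks
```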